Hacker News at SunsetHost: Identity Attacks Surge, Critical Vulnerabilities Expand, and the New Security Reality Comes Into Focus

Weekly Recap: A Consistent Pattern Across Modern Attacks

What stands out in the current cycle is not any single technique, but the convergence of tactics around one principle: operate within trust, not against it. Attackers are no longer prioritizing noisy exploitation paths when quieter, more reliable options exist. Instead of forcing entry, they are embedding themselves into legitimate workflows—software updates, browser activity, SaaS integrations, developer tools, and identity systems—where their actions inherit credibility by default.

This is why third-party tooling has become such a consistent entry point. Organizations depend on external services for hosting, deployment, analytics, payments, and collaboration. Each integration extends the trust boundary outward. When one of those components is compromised—whether through a supply chain incident, credential theft, or misconfiguration—it becomes a pre-authorized pathway into the environment. The attacker does not need to bypass controls; they are already inside them.

Browser-based activity is another critical layer in this pattern. The browser has effectively become the operating system for modern work—handling authentication, API access, file transfers, and application interaction. Malicious extensions, session hijacking, and token theft allow attackers to operate within that layer invisibly. Actions executed through a valid session—accessing dashboards, downloading data, initiating workflows—are indistinguishable from legitimate user behavior unless there is deep behavioral monitoring in place.

The same principle applies to trusted download paths. Software updates, package repositories, and file-sharing platforms are all designed to streamline access and reduce friction. Attackers exploit that design by inserting malicious payloads into otherwise legitimate distribution channels. A brief compromise of a repository or delivery mechanism can result in widespread exposure, particularly when organizations rely on automated updates or dependency management systems.

What ties all of these vectors together is plausibility. The activity does not look malicious because it aligns with expected behavior. A user logs in, accesses a service, downloads a file, or runs a tool—each step is individually valid. The attack emerges from the sequence and context, not from any single anomalous action. This is why traditional detection models struggle. They are tuned to identify known signatures or clearly malicious events, not subtle deviations within normal operations.

As a result, the defensive model must shift from prevention-centric to visibility-centric. Prevention still matters, but it cannot be the only control. Organizations need continuous insight into how identities are used, how systems interact, and how behavior changes over time. This includes monitoring for anomalies such as unusual access patterns, privilege escalation events, atypical data movement, and deviations from established baselines. Without that visibility, these attacks remain effectively invisible.
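As a rough illustration of "deviations from established baselines", the sketch below flags a measurement that sits far outside a user's recent history. It is a minimal sketch, not a production detector: the function name `is_anomalous`, the three-standard-deviation threshold, and the sample download volumes are all assumptions made for the example.

```python
from statistics import mean, stdev


def is_anomalous(history, value, threshold=3.0):
    """Return True if `value` deviates strongly from the historical baseline.

    `history` is a list of past measurements (for example, MB downloaded
    per day). The threshold is an illustrative assumption, not a standard.
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu  # perfectly flat baseline: any change stands out
    return abs(value - mu) / sigma > threshold


# Hypothetical daily download volumes (MB) for one account.
baseline = [100, 110, 90, 105, 95]
print(is_anomalous(baseline, 102))   # typical day
print(is_anomalous(baseline, 5000))  # sudden bulk download
```

A single z-score is deliberately simple. Real baselines usually need per-user seasonality and several signals combined, which this sketch does not attempt.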

Speed is equally important. The interval between initial access and full compromise is often the only window available to contain an attack. Rapid detection and response capabilities, including automated alerting, containment workflows, and incident response playbooks, are essential to reducing impact. The longer an attacker operates within a trusted context, the more damage they can do without detection.

Another key element is the shift in attacker economics. The most effective techniques today are not the most technically advanced—they are the most scalable and repeatable. Identity compromise, trusted application abuse, and exploitation of known vulnerabilities offer high success rates with relatively low effort. Attackers are optimizing for efficiency, not novelty. They do not need new methods when existing ones continue to work consistently across environments.

This has a direct implication for how organizations prioritize risk. Focusing heavily on rare, sophisticated threats while underinvesting in identity security, access control, and patch management creates an imbalance. The majority of successful intrusions are occurring through well-understood, well-documented pathways that remain insufficiently controlled.

The pattern is clear and persistent. Trust relationships—between users and systems, between organizations and vendors, between applications and data—are being leveraged as attack vectors. Every integration, every credential, and every automated workflow is a potential point of entry if not properly governed and monitored.

The organizations that adapt to this reality will reorient their security posture around how systems are actually used, not how they are theoretically protected. They will invest in identity-centric controls, behavioral analytics, and rapid response capabilities. Those that continue to rely primarily on perimeter defenses and static prevention models will remain misaligned with the threat landscape.

The attacks are not becoming more complex. They are becoming more believable—and that is what makes them effective.

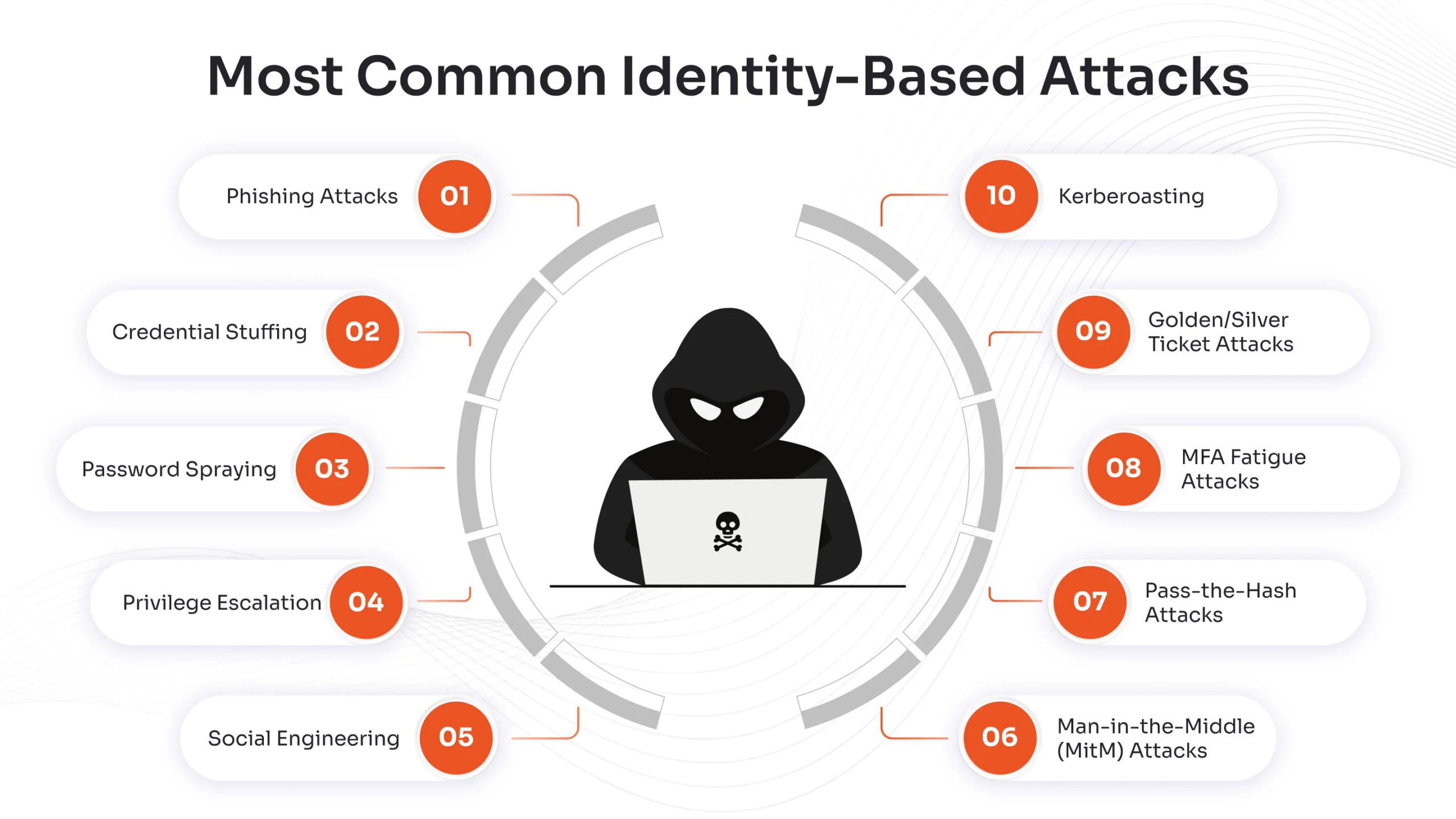

No Exploit Needed: Identity-Based Attacks Are Now the Primary Entry Point

What makes identity-driven intrusion so effective is not just that it works—it’s that it aligns perfectly with how modern infrastructure is built. Over the last decade, organizations have aggressively moved toward cloud-first architectures, SaaS platforms, federated identity systems, and remote access models. In that environment, authentication is no longer a gateway into the system—it is the system. When an attacker successfully impersonates a user, there is often no meaningful distinction between “external threat” and “internal activity.” The attacker is operating inside the trust boundary by design.

This is why identity attacks consistently outperform exploit-based techniques. Exploits require technical precision, version targeting, and often leave forensic artifacts. Identity compromise, by contrast, is low-friction and high-reliability. A single successful phishing campaign or token theft event can yield immediate access to email, file storage, internal applications, and even administrative consoles. From there, attackers expand quietly, often using built-in tools and legitimate APIs, which dramatically reduces the likelihood of detection.

The mechanics behind these attacks are also evolving. Traditional credential theft—usernames and passwords—is only one layer. More advanced campaigns now focus on session tokens, OAuth permissions, and authentication cookies. These artifacts allow attackers to bypass multi-factor authentication entirely, because they are effectively replaying a validated session rather than attempting a new login. In practical terms, this means even organizations with strong MFA adoption are still exposed if session management is not tightly controlled.
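One way to picture "tightly controlled" session management is a check that rejects a session when it is too old or when it is presented from a different context than the one it was issued to. The sketch below assumes a hypothetical `Session` record, an eight-hour maximum age, and a simple device identifier. A plain identifier can itself be copied, so stronger deployments bind sessions cryptographically; that is outside the scope of this example.

```python
import hmac
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_SESSION_AGE = timedelta(hours=8)  # assumption: illustrative policy value


@dataclass(frozen=True)
class Session:
    token_id: str
    issued_at: datetime
    device_id: str  # hypothetical identifier recorded at login


def session_is_acceptable(session, now, presented_device_id,
                          max_age=MAX_SESSION_AGE):
    """Reject sessions that are expired or replayed from another device."""
    if now - session.issued_at > max_age:
        return False  # force re-authentication after the maximum age
    # Constant-time comparison of the recorded and presented identifiers.
    return hmac.compare_digest(session.device_id, presented_device_id)


issued = datetime(2024, 1, 1, 9, 0)
s = Session("tok-1", issued, "laptop-42")
print(session_is_acceptable(s, issued + timedelta(hours=1), "laptop-42"))
print(session_is_acceptable(s, issued + timedelta(hours=1), "unknown-host"))
```

The design point is that a stolen token alone should not be enough: the check ties the session to both time and context.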

Another critical dimension is privilege escalation through identity sprawl. Most organizations operate with far more permissions than they realize. Users accumulate access over time—across SaaS tools, cloud environments, shared drives, and internal systems—without consistent auditing or revocation. When an attacker compromises a single identity, they often inherit a web of over-provisioned access rights. This turns a single compromised account into a pivot point for broader system control.
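A periodic entitlement review can start with something as simple as listing grants that have not been exercised recently. The sketch below is illustrative only: the grant records, field names, and 90-day idle threshold are assumptions, and a real review would pull this data from the identity provider or each SaaS platform.

```python
from datetime import date, timedelta


def stale_grants(grants, today, max_idle_days=90):
    """Return grants never used, or not used within `max_idle_days`."""
    cutoff = today - timedelta(days=max_idle_days)
    return [
        g for g in grants
        if g["last_used"] is None or g["last_used"] < cutoff
    ]


# Hypothetical access grants for a single user.
grants = [
    {"user": "alice", "resource": "crm", "last_used": date(2024, 5, 30)},
    {"user": "alice", "resource": "billing-admin", "last_used": date(2023, 11, 2)},
    {"user": "alice", "resource": "legacy-share", "last_used": None},
]

for g in stale_grants(grants, today=date(2024, 6, 1)):
    print(g["user"], g["resource"])
```

Each revoked grant is one less right an attacker inherits when that identity is compromised.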

Lateral movement in identity-based attacks is also fundamentally different from traditional network-based movement. Instead of scanning ports or exploiting services, attackers query directories, enumerate permissions, and request access through legitimate workflows. They may send internal phishing messages from a trusted account, approve their own access requests, or leverage misconfigured roles in cloud environments. Every step looks like normal user behavior unless you are explicitly monitoring for anomalies at the identity layer.

Detection, therefore, becomes significantly more complex. Signature-based tools and perimeter defenses are largely ineffective because there is no malicious payload crossing the boundary. The signals that matter are behavioral—impossible travel events, unusual login times, atypical resource access, privilege elevation patterns, and deviations from established usage baselines. Organizations that are not instrumented to observe and analyze identity behavior in real time are effectively blind to this class of attack.
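The "impossible travel" signal mentioned above can be sketched as a speed check between two consecutive logins. This is a minimal sketch under stated assumptions: the `Login` record, the 900 km/h ceiling (roughly airliner speed), and the coordinates are illustrative, and real systems also have to account for VPNs and imprecise IP geolocation.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumption: roughly commercial flight speed


@dataclass(frozen=True)
class Login:
    user: str
    when: datetime
    lat: float
    lon: float


def haversine_km(a, b):
    """Great-circle distance between two logins, in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def impossible_travel(prev, curr, max_kmh=MAX_PLAUSIBLE_KMH):
    """True if moving between the two logins would exceed `max_kmh`."""
    seconds = abs((curr.when - prev.when).total_seconds())
    hours = max(seconds, 1.0) / 3600  # avoid division by zero
    return haversine_km(prev, curr) / hours > max_kmh


london = Login("bob", datetime(2024, 6, 1, 9, 0), 51.5074, -0.1278)
new_york = Login("bob", datetime(2024, 6, 1, 10, 0), 40.7128, -74.0060)
print(impossible_travel(london, new_york))
```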

The operational implications are substantial. Security architecture must shift from infrastructure-centric controls to identity-centric controls. This includes enforcing strict conditional access policies, implementing least-privilege access models, continuously auditing permissions, and aggressively managing session lifecycles. Identity providers and access brokers are no longer administrative tools—they are critical security infrastructure.

It also requires a change in mindset. Identity is not just an authentication mechanism; it is a high-value target and a primary attack surface. Every login, every token, and every permission grant is a potential entry point. Organizations that continue to treat identity as a convenience layer—rather than as the core of their security model—will remain exposed to the most effective attack strategy currently in use.

This is why the statement holds: no exploit is needed. The system is not being broken into—it is being logged into.

NGate Malware Evolves: Trojanized Apps Weaponize NFC Payments

What makes this NGate iteration materially different is where it operates in the transaction lifecycle. Instead of trying to intercept data in transit or compromise backend systems, it embeds itself at the exact point where sensitive data is created, authenticated, and transmitted. That placement gives attackers first-party visibility into both the payment payload and the user’s authentication step, which is far more valuable than anything captured downstream.

NFC payments are designed around speed and implicit trust. The device, the app, and the user are all assumed to be legitimate at the moment of interaction. NGate exploits that assumption by positioning itself inside a trusted application flow—often a repackaged or tampered version of a legitimate payment utility. From the user’s perspective, nothing appears abnormal. The interface behaves correctly, the transaction proceeds as expected, and authentication is completed normally. Underneath that surface, however, the malware is capturing the NFC exchange and the PIN entry at the moment they occur.

This is a critical distinction. Intercepting NFC data alone has limited value without the corresponding authentication factor. By capturing both simultaneously, attackers gain the ability to reconstruct transactions, enable fraudulent payments, or extract reusable credentials depending on the payment scheme and downstream protections. In effect, the malware is not just observing the transaction—it is harvesting everything required to replicate or exploit it.

Technically, these attacks often rely on a combination of permission abuse and deep integration with Android system capabilities. Accessibility services are a common vector, allowing the malware to monitor screen content, observe user interactions, and capture input events such as PIN entry. Overlay techniques can be used to intercept or mimic input fields without raising suspicion. In parallel, NFC-related permissions and hooks allow the application to observe or relay communication between the device and the payment terminal. The result is a synchronized capture of both data streams.

What elevates the effectiveness of this approach is its alignment with legitimate user behavior. There is no need for aggressive exploitation, no visible disruption, and often no immediate indicators of compromise. The malware benefits from the user’s own actions—every transaction becomes a data collection event. This dramatically increases dwell time and the volume of sensitive data that can be harvested before detection.

Another important evolution is how these campaigns manage exfiltration and command infrastructure. Rather than relying on easily identifiable malicious servers, newer variants are increasingly using encrypted channels, legitimate cloud services, or transient infrastructure to move data. This reduces the effectiveness of traditional network-based detection and shifts the burden toward device-level telemetry and behavioral analysis.

The regional targeting seen in NGate campaigns is also deliberate. By focusing on specific markets and payment ecosystems, attackers can tailor the malware to local applications, banking integrations, and user habits. This increases success rates and reduces noise that might otherwise trigger broader detection. However, the underlying technique is not region-bound. Once validated, it can be adapted to other payment environments with minimal changes.

From a defensive standpoint, this class of attack exposes a fundamental gap in mobile security assumptions. Application legitimacy can no longer be inferred solely from appearance, branding, or distribution channel. Even trusted workflows—such as payments—must be treated as potential interception points. Controls need to extend into runtime behavior, permission usage, and anomaly detection at the device level.
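Permission auditing of the kind described here can be approximated by flagging apps that combine NFC access with an input-observing capability, whatever their branding or install source. The sketch below works on a hypothetical device inventory: the capability labels (`nfc`, `accessibility_service`, `overlay`) and package names are assumptions for illustration, not output from any real management tool.

```python
# Capabilities that let an app observe or intercept what the user enters.
INPUT_CAPTURE = {"accessibility_service", "overlay"}


def flag_risky_apps(apps):
    """Return (package, install_source) for apps pairing NFC with input capture."""
    flagged = []
    for app in apps:
        caps = set(app["capabilities"])
        if "nfc" in caps and caps & INPUT_CAPTURE:
            flagged.append((app["package"], app["install_source"]))
    return flagged


# Hypothetical inventory entries.
inventory = [
    {"package": "com.example.bank", "install_source": "official_store",
     "capabilities": ["nfc", "camera"]},
    {"package": "com.example.pay.helper", "install_source": "sideloaded",
     "capabilities": ["nfc", "accessibility_service", "internet"]},
    {"package": "com.example.reader", "install_source": "official_store",
     "capabilities": ["accessibility_service"]},
]

print(flag_risky_apps(inventory))
```

The combination is a triage signal rather than proof of compromise; legitimate apps can hold both capabilities, so flagged entries still need review.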

It also forces a reassessment of how sensitive actions are validated. Relying exclusively on device-local authentication (such as a PIN entered into an app) is increasingly risky if that environment can be observed or manipulated. Stronger transaction verification models—such as out-of-band confirmation, hardware-backed secure elements, and tighter integration with trusted execution environments—become essential to mitigate this exposure.

The broader takeaway is that attackers are no longer trying to break mobile systems from the outside. They are embedding themselves within legitimate processes and extracting value from inside the trust boundary. In that context, the most dangerous assumption is that a familiar interface equates to a secure one.

AI Risk Is Not the Technology—It’s the Lack of Governance

The current risk profile around AI is being mischaracterized. The technology itself is not inherently destabilizing; the instability comes from how it is being deployed. Organizations are integrating AI into core workflows—customer support, code generation, analytics, decision support—without first establishing the control plane that defines how those systems should operate, what data they can access, and how their outputs are validated. That sequencing is backwards. High-impact systems are entering production before the rules that govern them exist.

At a structural level, AI introduces a new processing layer that sits between users and data. Unlike traditional software, which follows deterministic logic, AI systems interpret, transform, and generate outputs based on probabilistic models and dynamic context. That means the same input can produce different outputs depending on surrounding data, prompt structure, and system state. Without governance, this variability becomes a risk multiplier. You are not just managing access—you are managing behavior.

Data exposure is the most immediate concern. AI systems are often granted broad access to internal knowledge bases, documents, tickets, code repositories, and communication logs to improve relevance and utility. If access controls are not tightly scoped, the model can inadvertently surface sensitive information—customer records, financial data, intellectual property—through its responses. This is not a breach in the traditional sense; it is a byproduct of how the system was configured. The model is doing exactly what it was allowed to do.

Prompt injection and model manipulation add another layer of complexity. Because AI systems accept natural language as input, they are susceptible to adversarial instructions embedded in content they process—documents, emails, web pages, or user inputs. An attacker can craft inputs that override intended behavior, instruct the model to ignore safeguards, or extract restricted data. This is not exploitation of a code flaw; it is exploitation of the model’s instruction-following design. Without input validation and output constraints, the system becomes highly influenceable.

There is also a growing issue around tool and API integration. Many enterprise AI deployments are connected to external services—databases, ticketing systems, cloud resources, development environments—so they can take action, not just provide information. This is where risk escalates from data exposure to operational impact. If an AI system can create records, modify configurations, or trigger workflows, then any manipulation of that system can translate directly into unauthorized actions. Governance must define not just what the AI can see, but what it is allowed to do.

Identity and access management within AI systems is another underdeveloped area. In many implementations, the AI operates with a broad service identity that aggregates permissions across systems. When a user interacts with the AI, they may effectively inherit that expanded access indirectly. This creates a privilege amplification scenario where the AI becomes a proxy for elevated access, bypassing normal role-based controls. Without strict identity mapping and permission scoping, this becomes a significant internal risk.
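One way to avoid the privilege amplification described above is to authorize every tool call against the requesting user's own permissions rather than the AI system's service identity, and to deny any tool that is not explicitly listed. The sketch below is illustrative: the tool names and permission strings are hypothetical.

```python
# Hypothetical allowlist: each tool maps to the permission a user must hold.
TOOL_REQUIRED_PERMISSION = {
    "search_tickets": "tickets:read",
    "update_ticket": "tickets:write",
    "change_firewall_rule": "network:admin",
}


def authorize_tool_call(user_permissions, tool):
    """Allow a tool call only if the *user* holds the required permission."""
    required = TOOL_REQUIRED_PERMISSION.get(tool)
    if required is None:
        return False  # default deny: unlisted tools are never callable
    return required in user_permissions


support_agent = {"tickets:read", "tickets:write"}
print(authorize_tool_call(support_agent, "update_ticket"))
print(authorize_tool_call(support_agent, "change_firewall_rule"))
print(authorize_tool_call(support_agent, "delete_database"))
```

Because the decision is made outside the model, a manipulated prompt cannot talk its way into an action the user could not have performed directly.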

Auditability and accountability are also materially different in AI-driven environments. Traditional systems log discrete actions tied to explicit commands. AI systems generate outputs through layered inference, making it harder to trace exactly why a decision or response occurred. If an AI system provides incorrect guidance, exposes sensitive data, or triggers an unintended action, organizations must be able to reconstruct the sequence of inputs, context, and model behavior that led to that outcome. Without this visibility, incident response and compliance become compromised.
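To make that reconstruction possible, each interaction needs a durable record of the input, the context supplied, and the output. The sketch below shows one possible shape: an append-only log in which every entry carries a hash of the previous one, so later edits are detectable. The hash chaining is a design choice added for this example, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash, record):
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append an interaction record, chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev:
            return False
        if entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


log = []
append_record(log, {"user": "alice", "prompt": "summarize ticket 12",
                    "context_ids": ["doc-7"], "output": "summary text"})
append_record(log, {"user": "alice", "prompt": "close ticket 12",
                    "context_ids": [], "output": "tool:update_ticket"})
print(verify_chain(log))
```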

The pace of deployment is amplifying all of these issues. Business units are adopting AI tools directly—often outside of centralized IT or security oversight—to gain efficiency advantages. This creates a fragmented environment where multiple AI systems operate with inconsistent controls, overlapping data access, and varying levels of risk exposure. Shadow AI is quickly becoming analogous to shadow IT, but with a far greater potential impact due to the systems’ ability to process and act on sensitive information.

Effective governance requires a deliberate framework that addresses these dimensions holistically. This includes strict data classification and access boundaries, enforced input and output controls, scoped permissions for integrated tools, continuous monitoring of model behavior, and clear policies for acceptable use. It also requires aligning AI systems with existing security controls rather than treating them as standalone tools.

The key point is that AI does not introduce entirely new categories of risk; it amplifies existing ones. Data leakage, unauthorized access, and system misuse have always been concerns. AI increases the speed, scale, and subtlety with which those risks can manifest. Without governance, organizations are not just exposed; they are running systems they do not fully control or understand.

The gap between deployment and governance is where the risk lives. Closing that gap is now a primary security priority.

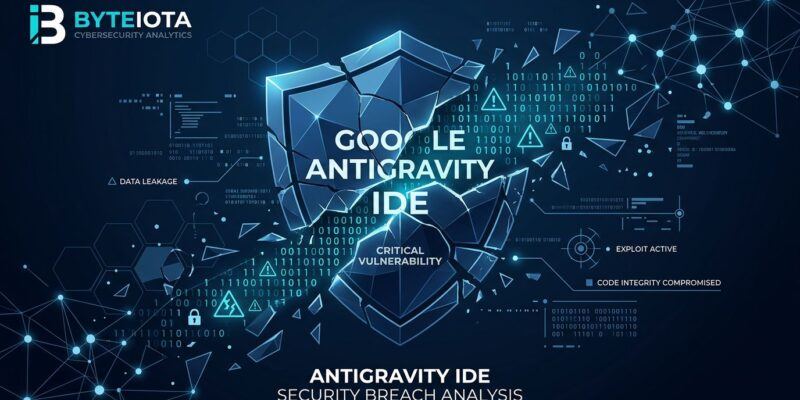

Antigravity IDE Vulnerability: AI Development Tools Expand the Attack Surface

What distinguishes this latest NGate evolution is not just its technical capability, but its strategic positioning inside the transaction flow itself. Instead of attempting to intercept data at the network level or through brute-force compromise, the malware inserts itself directly into the trusted application layer where sensitive financial interactions are already occurring. This is a far more efficient attack model because it eliminates friction. The user willingly initiates the transaction, authenticates themselves, and provides the exact data the attacker needs—all within what appears to be a legitimate environment.

Near-field communication (NFC) is particularly attractive in this context because of its design assumptions. NFC-based payments are built around proximity and trust. The expectation is that if a device is physically close enough to complete a transaction, and the user has authenticated with a PIN or biometric, the transaction can be considered valid. NGate exploits this assumption by capturing both the transmitted NFC data and the authentication inputs at the moment they are generated. This effectively allows attackers to clone or replay transaction data, or extract sensitive credentials that can be used for downstream fraud.

The use of a trojanized legitimate application is the critical enabler here. By embedding malicious code within a trusted payment interface—such as a repurposed version of a widely used app—attackers bypass the primary barrier to mobile compromise: user skepticism. There is no suspicious sideloaded APK in the traditional sense, no obvious red flags in the interface, and no immediate performance anomalies. The application behaves as expected on the surface, while quietly exfiltrating data in parallel. This duality significantly extends dwell time, allowing attackers to collect multiple transactions and credentials before detection.

From a technical standpoint, these attacks often leverage accessibility services, overlay techniques, and deep integration with Android’s permission model. Accessibility APIs, originally designed to assist users with disabilities, can be abused to monitor screen activity, capture keystrokes, and interact with UI elements programmatically. Overlay attacks can present invisible or near-invisible layers that intercept user input, including PIN entry. When combined with NFC interception capabilities, the attacker gains full visibility into both the data being transmitted and the credentials used to authorize it.

Another important dimension is the shift away from obviously malicious infrastructure. Earlier iterations of mobile malware relied heavily on command-and-control servers that could be identified and blocked. Modern campaigns are increasingly using encrypted channels, legitimate cloud services, or even decentralized communication methods to exfiltrate data. This makes network-level detection significantly more difficult and pushes the burden of defense further onto endpoint monitoring and behavioral analysis.

The geographic targeting of campaigns like this is also deliberate. By focusing on specific regions and payment ecosystems, attackers can tailor their malware to local banking applications, transaction standards, and user behaviors. This increases success rates and reduces the likelihood of immediate global detection. However, the underlying technique is portable. Once proven effective in one region, it can be adapted quickly to others with minimal modification.

What this ultimately reveals is a broader shift in mobile threat strategy. Attackers are no longer trying to break the system—they are integrating into it. By aligning themselves with legitimate user workflows, they reduce the need for exploitation and increase the probability of success. This is why traditional defenses—app store vetting, signature-based detection, and user awareness—are no longer sufficient on their own.

Defending against this class of attack requires a layered approach that includes runtime application self-protection, strict permission auditing, anomaly detection at the device level, and stronger controls around financial transaction verification. It also requires rethinking the trust model for mobile applications. The presence of an app on a device, even one that appears legitimate, can no longer be treated as an implicit guarantee of safety.

In this environment, trust is no longer a given—it is an attack vector.

CISA Adds 8 Actively Exploited Vulnerabilities to KEV Catalog

When the Cybersecurity and Infrastructure Security Agency (CISA) adds vulnerabilities to its Known Exploited Vulnerabilities (KEV) catalog, it is not issuing a theoretical advisory; it is documenting confirmed, real-world exploitation. That distinction matters. The KEV list is not a prediction model or a research backlog; it is a catalog of vulnerabilities that attackers are already using successfully in active campaigns. Inclusion on that list effectively compresses the risk timeline to zero. There is no “window” to assess or debate severity. The exposure is immediate.

What makes KEV entries particularly significant is their operational relevance. These vulnerabilities are not obscure edge cases buried in niche systems. They are typically found in widely deployed enterprise technologies—network infrastructure, remote access platforms, web services, and management interfaces that form the backbone of organizational IT environments. That ubiquity is precisely why they are targeted. Attackers prioritize vulnerabilities that maximize reach with minimal effort, and KEV entries represent the intersection of accessibility, reliability, and impact.

The addition of eight new vulnerabilities in a single update reinforces a persistent trend: exploitation is scaling faster than remediation. Threat actors are industrializing their workflows, automating scanning and exploitation across large address spaces, and integrating newly disclosed vulnerabilities into their toolkits almost immediately. In many cases, exploitation begins within hours or days of public disclosure. By the time a vulnerability is formally recognized and added to KEV, attackers have often already established footholds in unpatched environments.

This dynamic exposes a structural weakness in how many organizations approach patch management. Traditional models treat patching as a scheduled operational task—monthly cycles, maintenance windows, staged rollouts. That cadence is misaligned with the current threat environment. When a vulnerability is actively exploited, delay is not neutral—it is cumulative risk. Every unpatched system represents a known, accessible entry point that adversaries are actively probing.

Another critical factor is the role of these vulnerabilities in broader attack chains. Rarely are they the end objective. Instead, they function as initial access vectors that enable subsequent stages—credential harvesting, privilege escalation, lateral movement, and data exfiltration. A single unpatched vulnerability can serve as the opening move in a multi-stage intrusion that ultimately compromises an entire environment. This is why KEV entries carry disproportionate weight relative to their individual technical details.

The federal remediation timelines attached to KEV updates are also instructive. They are not arbitrary deadlines; they are calibrated responses to active threat intelligence. While these mandates apply directly to federal agencies, they serve as a benchmark for the private sector. Organizations operating outside that framework should interpret these timelines as best-practice thresholds for risk containment, not optional guidance.

There is also a visibility challenge that compounds the issue. Many organizations do not have a complete or continuously updated inventory of their assets, particularly in hybrid and cloud environments. Without accurate asset visibility, it becomes difficult to determine whether a given vulnerability is present, let alone prioritize its remediation. This creates blind spots where exploitable systems remain exposed simply because they are not fully accounted for.

From a defensive standpoint, this elevates the importance of vulnerability prioritization over volume-based patching. Not all vulnerabilities carry equal risk, and KEV provides a clear signal for where attention should be focused. Security teams must align their remediation efforts with active threat intelligence, ensuring that resources are directed toward vulnerabilities that are actually being exploited rather than those that are merely theoretical.
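In practice, KEV-driven prioritization can be as simple as intersecting scanner findings with the catalog and ordering the matches by remediation due date. The sketch below uses made-up data: the CVE identifiers are placeholders, and the field names are illustrative rather than those of the published catalog feed.

```python
from datetime import date


def prioritize(findings, kev_due_dates):
    """Return findings that appear in KEV, earliest due date first."""
    hits = [
        {**f, "due": kev_due_dates[f["cve"]]}
        for f in findings
        if f["cve"] in kev_due_dates
    ]
    return sorted(hits, key=lambda f: f["due"])


# Placeholder catalog extract: CVE id -> remediation due date.
kev = {
    "CVE-0000-0001": date(2024, 7, 1),
    "CVE-0000-0002": date(2024, 6, 15),
}

# Placeholder scanner output.
findings = [
    {"host": "vpn-gw-1", "cve": "CVE-0000-0001"},
    {"host": "web-3", "cve": "CVE-0000-0009"},   # not in KEV
    {"host": "mgmt-2", "cve": "CVE-0000-0002"},
]

for f in prioritize(findings, kev):
    print(f["due"], f["host"], f["cve"])
```

Findings outside the catalog are not dropped from the backlog; they are handled by normal risk scoring after the known-exploited items.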

The broader implication is that patch management has transitioned from a maintenance function to a core security discipline. It requires real-time intelligence integration, rapid decision-making, and cross-functional coordination between security, operations, and engineering teams. Automation plays a critical role here, but it must be paired with clear prioritization logic and accountability.

Ultimately, KEV updates redefine urgency. They eliminate ambiguity about whether a vulnerability matters and replace it with a simple operational reality: attackers are already using this, and any delay in remediation directly increases the likelihood of compromise. Organizations that internalize this shift and adapt their processes accordingly will reduce their exposure. Those that continue to operate on legacy patching cycles will remain predictably vulnerable to the most widely exploited attack paths in the current threat landscape.

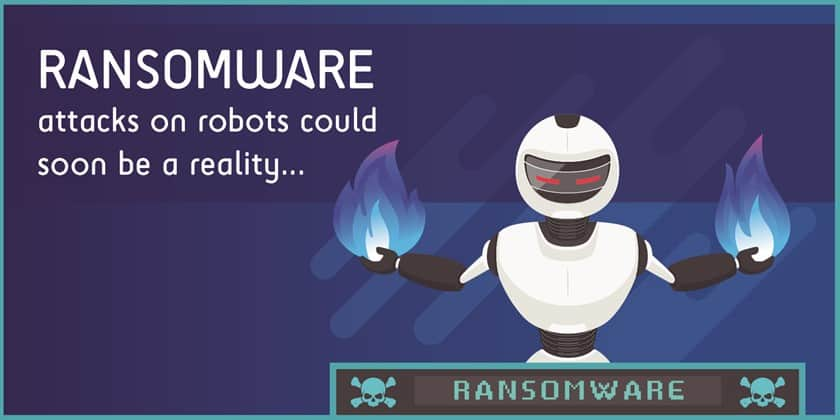

Ransomware Reality: Backups Alone Are No Longer Enough

The longstanding assumption in enterprise resilience planning has been straightforward: if everything fails, restore from backup. That assumption is now systematically being dismantled. Modern ransomware operations are not opportunistic smash-and-grab events—they are structured, multi-stage intrusions where backup infrastructure is identified, accessed, and neutralized before encryption is triggered. By the time the ransomware payload executes, the recovery path has already been degraded or eliminated.

Attackers understand that backups are the single most effective countermeasure against ransomware. As a result, they treat them as a primary target, not an afterthought. Once initial access is achieved—often through identity compromise or an unpatched vulnerability—the attacker’s first objective is reconnaissance. They map the environment, identify storage systems, locate backup repositories, and determine how those systems are managed and accessed. If backup systems are reachable using standard credentials or are integrated into the same network domain, they become immediately vulnerable.

From there, the attack shifts to control. Backup catalogs may be deleted, retention policies altered, snapshots removed, and replication processes disrupted. In some cases, attackers introduce silent corruption—modifying or encrypting backup data in a way that is not immediately detected. This ensures that even if restoration is attempted, the recovered data is incomplete or unusable. The goal is not just to encrypt production systems, but to eliminate confidence in recovery.

Timing is a critical component of this strategy. Many ransomware groups operate with a dwell time measured in days or weeks. During this period, they maintain persistence, escalate privileges, and systematically weaken defenses. Backups created during this window may already contain compromised or staged data, rendering them ineffective. When the final encryption phase begins, organizations often discover that their “clean” restore points are either too old to be viable or already tainted.

This is why the concept of backup immutability has become central. Immutable backups are designed so that once data is written, it cannot be altered or deleted within a defined retention period—even by administrators. This breaks the attacker’s ability to tamper with recovery points after gaining access. However, immutability alone is not sufficient if the backup system itself is accessible through the same identity plane as the rest of the environment. If an attacker can control the account that manages the immutable system, they may still be able to disrupt operations or prevent restoration.

Segmentation is the next critical layer. Backup infrastructure must be logically and, ideally, physically isolated from production environments. This includes separate authentication domains, restricted network pathways, and limited administrative overlap. The objective is to ensure that a compromise in the primary environment does not automatically extend to backup systems. Without segmentation, backups are simply another asset within the same attack surface.

Continuous validation addresses a different but equally important risk: false confidence. Many organizations assume their backups are functional without regularly testing restoration under realistic conditions. In a ransomware scenario, the ability to restore quickly and accurately is the difference between operational recovery and prolonged downtime. Validation must include not just data integrity checks, but full recovery drills that simulate real-world failure conditions. Anything less leaves critical gaps untested.
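As a minimal sketch of the integrity-check half of validation, the snippet below compares a restored directory against a manifest of SHA-256 digests recorded at backup time. The manifest format (relative path mapped to hex digest) and the function names are assumptions made for this example; a full recovery drill would also need to boot and exercise the restored systems.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large restores do not exhaust memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(restore_root: Path, manifest: dict) -> dict:
    """Classify every manifest entry as ok, missing, or corrupt."""
    report = {"missing": [], "corrupt": [], "ok": []}
    for rel_path, expected in sorted(manifest.items()):
        candidate = restore_root / rel_path
        if not candidate.is_file():
            report["missing"].append(rel_path)
        elif sha256_file(candidate) != expected:
            report["corrupt"].append(rel_path)
        else:
            report["ok"].append(rel_path)
    return report
```

A check like this only means something if the manifest is stored where an intruder in the production environment cannot rewrite it. Otherwise silent corruption can be matched by a silently updated manifest.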

Detection and response speed also play a decisive role. The earlier an intrusion is identified, the greater the likelihood that clean, uncompromised backups still exist. This requires monitoring not just for encryption activity, but for the precursor behaviors—unusual access to backup systems, changes in retention policies, mass deletion events, or anomalous administrative actions. By the time encryption begins, the opportunity to preserve recovery options may already be gone.
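The precursor behaviors listed above can be expressed as simple rules over a backup system’s audit trail. The sketch below assumes a hypothetical event shape (`AuditEvent` with `actor`, `action`, and `detail`) and made-up action names; real products expose different log schemas, and the threshold is illustrative.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AuditEvent:
    actor: str
    action: str   # hypothetical names, e.g. "delete_snapshot", "update_retention"
    detail: dict


DESTRUCTIVE_ACTIONS = {"delete_snapshot", "delete_catalog", "disable_replication"}


def find_precursors(events, known_admins, delete_threshold=5):
    """Return human-readable alerts for behavior that often precedes encryption."""
    alerts = []
    destructive = Counter()
    for ev in events:
        # Rule 1: any action by an account outside the expected admin set.
        if ev.actor not in known_admins:
            alerts.append(f"unknown actor {ev.actor} performed {ev.action}")
        # Rule 2: retention policy shortened.
        if ev.action == "update_retention":
            old, new = ev.detail.get("old_days"), ev.detail.get("new_days")
            if old is not None and new is not None and new < old:
                alerts.append(f"{ev.actor} shortened retention {old}d -> {new}d")
        if ev.action in DESTRUCTIVE_ACTIONS:
            destructive[ev.actor] += 1
    # Rule 3: mass deletion or disruption by a single account.
    for actor, count in destructive.items():
        if count >= delete_threshold:
            alerts.append(f"{actor} performed {count} destructive actions")
    return alerts
```

Note that the second and third rules fire even for known administrators. That matters because a compromised legitimate account is the scenario this article is describing.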

There is also a financial dimension. Ransomware operators rely on the assumption that organizations will pay if recovery is not viable, so targeting backups directly raises the probability of payment. This turns backup security from a technical concern into a business-critical control with direct implications for financial exposure, regulatory compliance, and reputational risk.

The broader shift is clear. Backups are no longer a passive safety net—they are an active component of the security architecture that must be hardened, monitored, and continuously validated. Treating them as a secondary system or a compliance checkbox is no longer viable.

In the current threat environment, a backup strategy that is not explicitly designed to withstand adversarial interference is functionally equivalent to not having one at all.

Critical SGLang Vulnerability Enables Remote Code Execution

What elevates this SGLang issue from a routine vulnerability to a systemic concern is the way it reframes model artifacts. In many environments, model files—weights, serialized graphs, tokenizer configs, and packaging formats like GGUF—are treated as inert data. In practice, they are tightly coupled to parsers, loaders, and runtime hooks that execute complex logic during deserialization and initialization. CVE-2026-5760 exploits that boundary: by crafting a malicious model file that abuses how SGLang ingests and processes model data, an attacker can trigger remote code execution (RCE) at load time.

This is not an edge case; it’s a design pressure point. Modern AI stacks routinely pull models from external repositories, mirrors, or internal registries, then load them automatically as part of inference services, batch jobs, or developer workflows. If the loader trusts the file format implicitly—failing to enforce strict schema validation, bounds checking, and safe deserialization—the model becomes an execution vector. The attack doesn’t require a network exploit or privileged access; it rides the normal workflow of “download → load → run.”

The GGUF angle is particularly instructive. Formats designed for performance and portability often embed metadata, quantization parameters, and layout instructions that the runtime must interpret. If that interpretation path includes unsafe memory handling, dynamic allocation without constraints, or execution of embedded instructions/macros, a malicious file can pivot from “data” to “payload.” In practical terms, the attacker is weaponizing the model-loading pipeline itself.
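To make “interpret with constraints” concrete, here is a minimal sketch of a bounds-checked header read. It assumes the publicly documented GGUF v2/v3 header layout (4-byte magic, 32-bit version, 64-bit tensor count, 64-bit metadata key/value count, little-endian). The ceilings and the `check_gguf_header` name are illustrative assumptions, not values or functions taken from SGLang or any other runtime.

```python
import struct

GGUF_MAGIC = b"GGUF"
# magic, version, tensor_count, metadata_kv_count (little-endian, no padding)
HEADER = struct.Struct("<4sIQQ")

# Illustrative ceilings only; a real deployment would choose its own.
MAX_TENSORS = 100_000
MAX_METADATA_KV = 10_000
SUPPORTED_VERSIONS = {2, 3}


class ModelFormatError(ValueError):
    """Raised when a model file fails structural validation."""


def check_gguf_header(blob: bytes) -> dict:
    """Validate the fixed-size header before any further parsing happens."""
    if len(blob) < HEADER.size:
        raise ModelFormatError("file too short for a GGUF header")
    magic, version, tensor_count, kv_count = HEADER.unpack_from(blob, 0)
    if magic != GGUF_MAGIC:
        raise ModelFormatError("bad magic bytes")
    if version not in SUPPORTED_VERSIONS:
        raise ModelFormatError(f"unsupported version {version}")
    # Reject counts that would drive huge allocations or loops downstream.
    if tensor_count > MAX_TENSORS:
        raise ModelFormatError(f"tensor count {tensor_count} exceeds limit")
    if kv_count > MAX_METADATA_KV:
        raise ModelFormatError(f"metadata count {kv_count} exceeds limit")
    return {"version": version, "tensors": tensor_count, "metadata_kv": kv_count}
```

A header check like this is only the first gate. Metadata values themselves (strings, arrays, and anything later passed to a template engine or interpreter) would need the same treatment before the runtime acts on them.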

The impact profile of an RCE in an AI runtime is broad. Many SGLang deployments run on GPU hosts, access local caches, connect to model registries, and integrate with orchestration layers. Once code execution is achieved, attackers can:

- Extract API keys, tokens, or credentials present in environment variables or config files

- Modify inference logic or inject backdoors into served models

- Pivot laterally to adjacent services within the same container/VM or cluster

- Tamper with outputs (data integrity attacks) without obvious system-level indicators

What makes this class of vulnerability especially dangerous is how it blends into legitimate operations. Loading a model is a routine action. If the compromise occurs during that step, there may be no anomalous network signature or exploit traffic to flag. The “trigger” is the file itself, and the execution path is part of normal startup or inference handling.

This also exposes a supply chain dimension that many teams underestimate. External model hubs, shared internal registries, and even artifacts passed between teams can become distribution channels for malicious models. A poisoned model uploaded to a trusted repository—or swapped during a brief compromise window—can propagate quickly across environments that automatically sync or cache dependencies. In that scenario, a single malicious artifact can lead to multiple downstream compromises.

Defensively, the first principle is to stop treating model files as trusted by default. They must be handled as untrusted input with strict controls:

- Hardened deserialization: Enforce strict schema validation, disable unsafe loaders, and avoid dynamic execution paths during parsing.

- Sandboxed loading: Load and validate models in isolated environments (containers with minimal privileges, no host access, constrained syscalls) before promotion to production.

- Provenance and integrity: Require cryptographic signing of model artifacts, verify checksums, and pin versions. Prefer curated registries over ad hoc downloads.

- Runtime isolation: Separate inference services from sensitive infrastructure; minimize access to secrets and internal networks.

- Behavioral monitoring: Detect anomalous activity during model load (unexpected file access, process spawning, network egress) and at inference time (sudden config changes, output manipulation patterns).

- Least-privilege execution: Ensure the service account running the model has only the permissions it absolutely needs—no broad filesystem or network rights.
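Two of the controls above are small enough to sketch directly: verifying an artifact against a pinned checksum, and stripping secrets from the environment handed to the model-serving process. The function names, the pin table, and the allowlist are hypothetical. Checksum pinning is shown here in place of full cryptographic signing, which is the stronger control but needs key management that does not fit a short example.

```python
import hashlib
import hmac


def verify_artifact(name: str, data: bytes, pins: dict) -> bool:
    """Return True only if `data` matches the SHA-256 pinned for `name`."""
    expected = pins.get(name)
    if expected is None:
        return False  # unpinned artifacts are rejected rather than trusted
    actual = hashlib.sha256(data).hexdigest()
    return hmac.compare_digest(actual, expected)


def scrubbed_env(env: dict, allowlist: set) -> dict:
    """Keep only allowlisted variables for the model-serving process."""
    return {key: value for key, value in env.items() if key in allowlist}


# Hypothetical pin; real pins would come from a reviewed, access-controlled manifest.
pins = {"example-model.gguf": hashlib.sha256(b"trusted bytes").hexdigest()}
print(verify_artifact("example-model.gguf", b"trusted bytes", pins))
print(verify_artifact("example-model.gguf", b"swapped bytes", pins))
print(scrubbed_env({"PATH": "/usr/bin", "API_KEY": "secret"}, {"PATH"}))
```

Both helpers default to denial: an artifact with no pin fails verification, and a variable not on the allowlist never reaches the process. That mirrors the “untrusted by default” stance the list describes.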

There is also a process implication. Security review must extend to the ML lifecycle: model sourcing, validation, promotion, and deployment. CI/CD pipelines that automatically pull and deploy models need guardrails—approval gates, artifact scanning, and isolation stages—before anything reaches production.
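One way to picture such guardrails is as a set of gates that must all pass before promotion. The sketch below is an assumption about how a team might structure this, not a description of any particular CI/CD product; `Candidate`, `GATES`, and `promotion_blockers` are hypothetical names, and the evidence fields would be filled in by earlier pipeline stages.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    """Evidence collected about a model artifact before promotion."""
    name: str
    digest_verified: bool        # checksum or signature matched the pin
    scan_findings: int           # issues reported by artifact scanning
    sandbox_load_ok: bool        # loaded cleanly in an isolated stage
    approved_by: Optional[str]   # human approver, if any


# Each gate is a label plus a predicate that must hold for promotion.
GATES = [
    ("integrity check", lambda c: c.digest_verified),
    ("artifact scan", lambda c: c.scan_findings == 0),
    ("sandboxed load", lambda c: c.sandbox_load_ok),
    ("human approval", lambda c: bool(c.approved_by)),
]


def promotion_blockers(candidate: Candidate) -> list:
    """Return the labels of every gate the candidate fails (empty means promote)."""
    return [label for label, passes in GATES if not passes(candidate)]
```

Returning the full list of failed gates, rather than stopping at the first, gives reviewers one complete picture of why an artifact was held back.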

The broader takeaway is that AI infrastructure is converging with traditional application security in a very concrete way. The same principles—input validation, sandboxing, least privilege, and supply chain integrity—apply, but the “inputs” now include complex binary artifacts that can carry executable intent.

Model files are no longer passive. They are part of the execution surface. Treating them otherwise is what turns a loading step into a compromise event.